I have a confession to make. I used to run random A/B tests simply for the sake of running another test.

I’d read articles like 50 A/B Split Testing Ideas, jot down my favorite ones, and then come into work the next day ready to add a picture background or try a new CTA.

But the problem with running A/B tests this way is that while you can achieve conversion lifts, they’re usually short-lived and generally can’t be applied to other areas of your funnel.

All you’re left knowing is that a blue background converted better than green on one popup. Or that “Get Started Now” worked better than “Get Started.”

Now I’m not saying that it’s not important to test background colors or CTA text. It most certainly is.

But even more important than the superficial changes you make to your A/B tests is the core reasoning behind why you’re running those tests.

If you’re tired of running one-off experiments that don’t deliver results across your entire funnel, then keep reading. In this article I’ll explain how to run an A/B test scientifically, using a hypothesis, to get the most out of your conversion rate optimization.

Let’s get started!

Become a Scientist

Scientifically conducting A/B tests can be the difference between running a test that proves something and running a test that generates a random result.

Take a look at how most marketers are running A/B (or multivariate) tests these days.

Original:

Variation:

In this example both ads are advertising a Sony VAIO laptop. But in the variation, they’re simultaneously testing the CTA, headline, subheadline, and color.

Now imagine that you are on the Sony marketing team at the end of the month.

How can you explain what’s been working in your A/B testing strategy? Do people like a certain color? Can you use the phrase “Double the SSD storage for free” on another one of your campaigns?

No. There’s no real proof that any one thing had any more impact than any other. And (and here’s the clincher) what if one element had a huge positive effect but because of another element’s negative effect the test results were inconclusive?
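To see how that cancelling-out can happen, here’s a minimal sketch in Python. Every number in it is made up for illustration (the baseline rate, the size of each effect, and the assumption that effects simply add together are all hypothetical, not results from a real test). It shows a variation where the new headline helps, the new color hurts by the same amount, and the combined test reports no lift at all.

```python
# Hypothetical numbers, purely for illustration: none come from a real test.
BASELINE_RATE = 0.050  # assumed conversion rate of the original ad

# Assumed effect of each change on the conversion rate, in absolute points.
ASSUMED_EFFECTS = {
    "headline": +0.010,    # assumed: the new headline helps
    "cta": 0.000,          # assumed: no effect
    "subheadline": 0.000,  # assumed: no effect
    "color": -0.010,       # assumed: the new color hurts
}


def variation_rate(baseline, effects):
    """Conversion rate when every change ships together (assumes additive effects)."""
    return baseline + sum(effects.values())


combined = variation_rate(BASELINE_RATE, ASSUMED_EFFECTS)
observed_lift = combined - BASELINE_RATE

print(f"Original: {BASELINE_RATE:.3f}  Variation: {combined:.3f}  Lift: {observed_lift:+.3f}")
```

Under these assumptions the test looks like a wash, even though the headline on its own would have been a clear winner. Because all four changes shipped together, the result can’t tell you that.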

Don’t run tests like these.

This is why it’s important to develop a solid testing hypothesis before getting started.

What is a Hypothesis?

In science a hypothesis is defined as “a tentative insight into the natural world; a concept that is not yet verified but that if true would explain certain facts or phenomena”.

So what does that mean for marketing?

If your A/B test is built on an underlying hypothesis and that hypothesis is proved correct, you come away with a better understanding of the principles responsible for conversion.

And by this I don’t mean:

- Blue won over green

- The big button converted better than the small button

- “12 Resources For You” beat “12 New Resources” as an email subject line

While these could be the results of an A/B test, they don’t predict or prove anything outside of that specific test. And that’s why it’s important to start with a well-thought-out hypothesis.

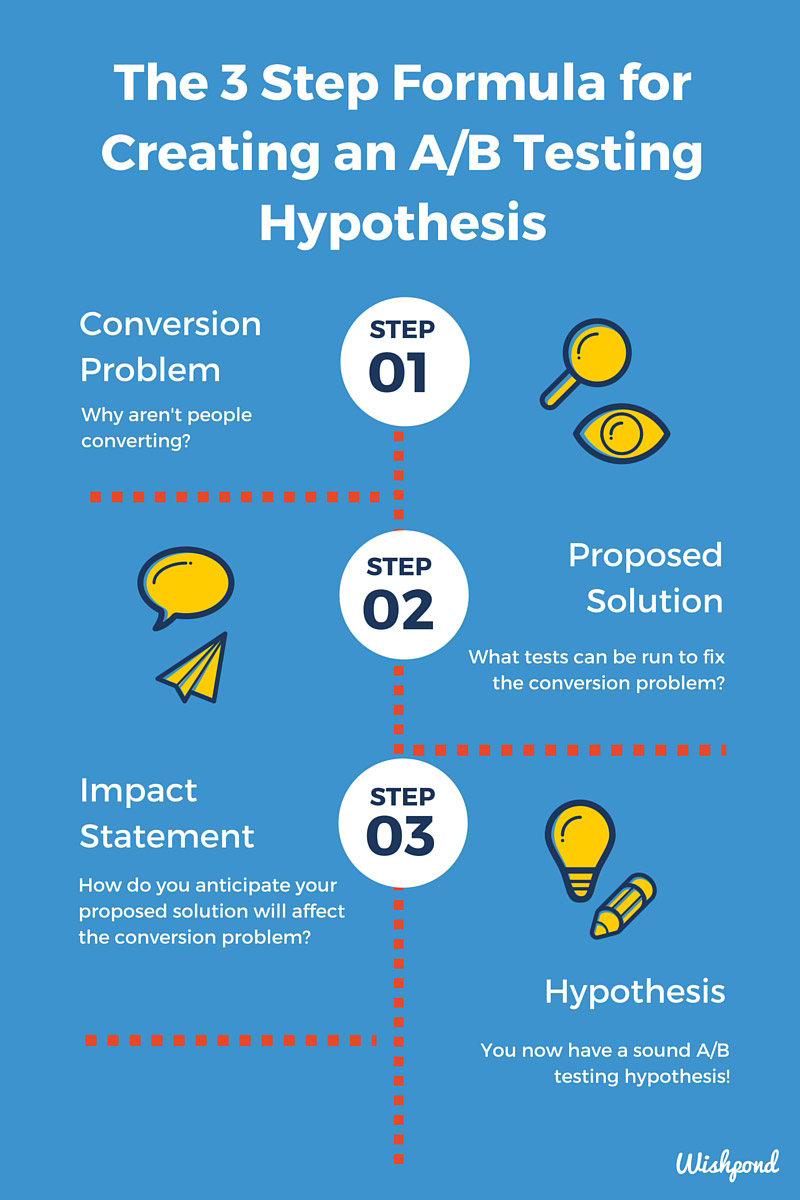

The 3 Step Formula for Creating an A/B Testing Hypothesis

Coming up with a good quality A/B testing hypothesis is actually quite simple. All you need is a:

- Conversion problem

- Proposed solution

- Impact statement

Step 1: The Conversion Problem

The conversion problem is a statement about why people aren’t currently converting. It requires you to take a look at your page from the perspective of your users. This includes considering the context of your offer, the usability of the page and overall design esthetics. With these factors considered, conversion problems could include:

- It’s not clear where the CTA is

- People don’t know enough about the product to convert

- The page copy isn’t resonating with our audience

- Value hasn’t been communicated adequately

Once you’ve defined why you believe your users aren’t converting (and it’s OK to come up with a list of these ideas), it’s time to move on to proposing a solution.

Step 2: A Proposed Solution

So say your conversion problem is “It’s not clear where the CTA is”. Based on this problem you could come up with a number of proposed solutions such as:

- Enlarging the CTA button

- Changing the CTA color to contrast better or differently with the page background

- Adding a directional cue

- Animating the CTA button

- Changing the page background color

Each of these may look like a test you’ve run in the past. The difference is that each one is a proposed solution to a specific problem: “It’s not clear where the CTA is.”

Once you have a proposed solution, you can move on to step three to start defining how your solution will fix your conversion problem.

Step 3: Impact Statement

Step three is all about articulating the impact that you predict your proposed solution will have on your conversion problem. Remember, this statement should take into account what you will be testing and how that will positively impact your conversion problem.

Continuing with the same example, if the conversion problem is that:

- “It’s not clear where the CTA is”.

And your proposed solution is:

- “Enlarging the CTA”.

Then your impact statement could be:

- “By enlarging the CTA it will be more clear where the CTA is and users will be more likely to convert”.

In other words, you can visualize your impact statement as:

Proposed solution + conversion problem = impact statement

By following this 3 step process, you can arrive at a clearer understanding of why your traffic isn’t converting, and can develop a clear impact statement to test how to improve.

And what’s even better? Your impact statement is your hypothesis.
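If you keep a testing log, one way to make the formula stick is to record each hypothesis in a fixed shape. The Python sketch below is just one possible layout, not a prescribed format: the `Hypothesis` class and its field names (including `expected_outcome`) are hypothetical names chosen for this example. It stores the three pieces and assembles the impact statement from them.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Hypothesis:
    """One A/B testing hypothesis (hypothetical structure for illustration)."""

    conversion_problem: str  # Step 1: why people aren't converting
    proposed_solution: str   # Step 2: the change you will test
    expected_outcome: str    # Step 3: how the change addresses the problem

    def impact_statement(self) -> str:
        """Combine the solution and the predicted outcome into one testable sentence."""
        return f"By {self.proposed_solution}, {self.expected_outcome}."


cta_hypothesis = Hypothesis(
    conversion_problem="It's not clear where the CTA is",
    proposed_solution="enlarging the CTA",
    expected_outcome=(
        "it will be clearer where the CTA is "
        "and users will be more likely to convert"
    ),
)

print(cta_hypothesis.impact_statement())
```

Writing the conversion problem down next to the impact statement keeps every test tied to the reason you ran it, which is what lets you reuse the lesson elsewhere in your funnel.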

That wasn’t so hard now, was it?

Wrapping Up

If you’re looking to maximize the impact you’re getting from all of your A/B tests, developing a well-crafted hypothesis is the first step.

Being scientific about A/B testing doesn’t have to be difficult either. Remember to work through the 3 step formula for generating an effective hypothesis:

- Conversion problem

- Proposed solution

- Impact statement

With this three step formula in mind, you’ll be armed to create better tests, see better results, and have an overall better understanding of why your A/B tests are winning, and how those principles can be applied to the rest of your marketing campaigns.
